Crowdsourcing and Colonoscopies: The Benefits of Distributed Knowledge for Cancer Diagnosis

Published Aug-26-13

Breakthrough:

A medical study aimed at improving how specialists interpret CT scans in colorectal cancer diagnosis illustrates the value of the crowd.

Company:

National Institutes of Health, United States

The Story:

In many industries computers and software programs do some of the heavy lifting and enhance the capabilities of workers. Medicine is just one of many areas to have benefited from smart technologies.

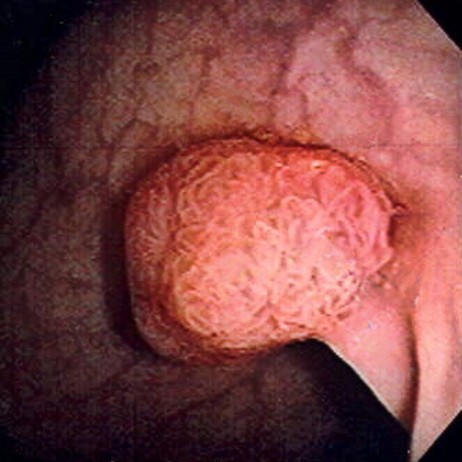

Colorectal cancer is a leading cause of cancer death and can be prevented by removing polyps (fleshy growths on the lining of the colon or rectum) before they become malignant. There are two ways to detect polyps. One is direct examination with conventional colonoscopy, in which a colonoscope is inserted into the rectum and guided to the large intestine. The other is virtual colonoscopy, in which a CT scanner creates two- and three-dimensional images of the colon. However, errors can creep in.

Minimizing Computer Errors

Computer-aided detection (CAD) technology is more effective than humans at finding small dings on a scan that could represent polyps. But there’s a problem. CAD systems also identify mock polyps, residual fecal matter clinging to the colon wall, or even artifacts such as hip prostheses.

The problem is also one of perception. As the technology becomes ever more sophisticated, the radiographers using it sometimes ignore the true findings. For example, a CAD system may detect a true polyp, but the radiographer doesn’t think it is one.

Ronald Summers, chief of the NIH Clinical Center’s Clinical Image Processing Service, helped develop the CAD system for colorectal screening and wanted to see if he could reduce the errors. To do so he needed to see radiographers use the system, but setting up these kinds of observation studies is time-consuming and expensive.

Enter the Crowd

Summers had recently heard about crowdsourcing and decided to see if he could harness the power of people over the internet to improve interpretation of CT scans.

After obtaining approval from the Office of Human Subjects Research, Summers developed a crowdsourcing project and enlisted 228 lay people, whom he called knowledge workers (KWs). They were tasked with finding features of polyp candidates in CT colonographic images and were given only a couple of minutes of training on what to look for.

Summers was surprised by the results. The crowd, with only a minimal amount of training, did just as well as a highly trained CAD system. He carried out further trials, four weeks apart, and achieved the same results.

The cost of these trials was minimal, with each one coming in at about $250.

Future Applications

Summers will now use these results to develop training programs that will allow medical staff to more accurately interpret CT colonographic images. He views his use of crowdsourcing as “a tool for understanding how people think about different types of images, and strategies to improve the performance of experts by learning from non-experts who are more readily available.”

Summers envisages crowdsourcing being able to reduce imaging problems in other medical fields and improve CAD systems for patient diagnosis.

Summers reported his findings in an issue of Radiology (Radiology 262:824–833, 2012). The paper is titled: ‘Distributed Human Intelligence for Colonic Polyp Classification in Computer-aided Detection for CT Colonography’.
