Decision-Making Robot Transforms Its Shape to Tackle Tasks

A team from Cornell University has created a robot that can perceive its surroundings and autonomously transform into different shapes to perform various tasks.

The robot can explore an unknown environment and decide when and how to reconfigure its shape and move objects.

“This is the first time modular robots have been demonstrated with autonomous reconfiguration and behavior that is perception-driven,” said project principal investigator Hadas Kress-Gazit.

"We are creating a modular system that is able to do different tasks autonomously. By changing the high-level task, it totally changes its behavior."

Hadas Kress-Gazit of Cornell University and colleagues describe their work on modular self-reconfigurable robots (MSRR) in a new paper in Science Robotics.

Adaptability

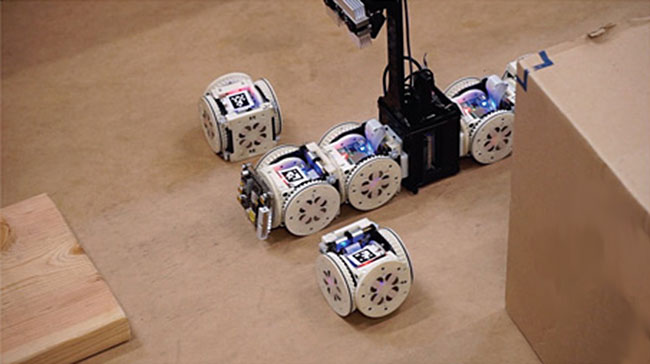

The robot is composed of cube-shaped modules on wheels that can form new shapes by attaching to and detaching from one another. The modules use Wi-Fi to communicate with a central system, and magnets allow them to attach to each other.

In the Science Robotics paper, the team describes what makes the robot so adaptable: centralized sensory processing, environmental perception, and decision-making software. The droid currently has a library of 57 possible configurations.
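
As a rough illustration of how a decision-making layer might pick a shape from a configuration library, here is a minimal Python sketch. It is not the Cornell team's software: the configuration names, the environment labels, and the `choose_configuration` function are all hypothetical, and the three-entry library only stands in for the 57 configurations mentioned above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Configuration:
    name: str
    # Environment types this shape can handle (hypothetical labels).
    capabilities: frozenset


# Tiny stand-in library; every entry here is invented for illustration.
LIBRARY = [
    Configuration("car", frozenset({"open_floor"})),
    Configuration("snake", frozenset({"open_floor", "tunnel"})),
    Configuration("proboscis", frozenset({"open_floor", "high_shelf"})),
]


def choose_configuration(current, perceived_environment, library=LIBRARY):
    """Keep the current shape if it suits the perceived environment.

    Otherwise return the first library entry that can handle it,
    or None if no known configuration is suitable.
    """
    if perceived_environment in current.capabilities:
        return current  # no reconfiguration needed
    for candidate in library:
        if perceived_environment in candidate.capabilities:
            return candidate
    return None
```

In this sketch the robot reconfigures only when its current shape cannot handle what it perceives, which mirrors the idea of deciding both when and how to change shape.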

The accompanying video shows the shape-shifting droid in action. It highlights three experiments that demonstrate the capabilities of the system. The experiments involve identifying colored and tagged objects in a test area littered with obstacles and moving those objects around.

Although the robot made a number of errors, the team states that most were due to low-level hardware issues.

The full results can be read in ‘An integrated system for perception-driven autonomy with modular robots’ in Science Robotics.
