Google Artificial Intelligence Turns Sign Language into Speech

Google says it has developed algorithms that allow smartphones to interpret and read aloud sign language.

Although the tech giant has not developed an application of its own, it has published the algorithms so that developers can build their own apps.

These could provide a breakthrough in translating sign language via a smartphone. To date, such efforts have met with limited success, but the approach from Google's AI labs is an advance in real-time hand tracking.

Technological Advances

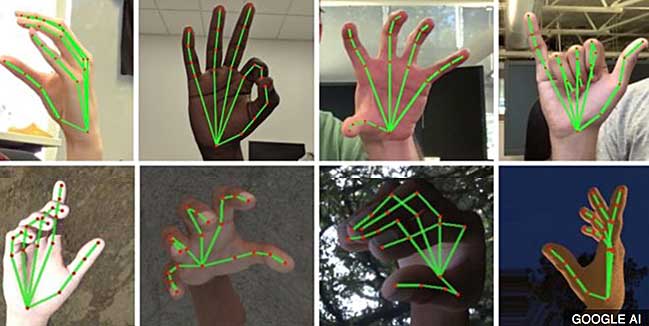

The technology uses some nifty shortcuts that increase the overall efficiency of machine learning systems to produce, in real time, a very accurate map of the hand and its fingers using just a smartphone and its camera.

“Whereas current state-of-the-art approaches rely primarily on powerful desktop environments for inference, our method achieves real-time performance on a mobile phone, and even scales to multiple hands,” write Google researchers Valentin Bazarevsky and Fan Zhang in a blog post explaining their approach.

“Robust real-time hand perception is a decidedly challenging computer vision task, as hands often occlude themselves or each other (e.g. finger/palm occlusions and handshakes) and lack high contrast patterns.”

Until now, when software tried to track hands on video, actions such as flicks of the wrist hid other parts of the hand, which confused earlier versions of the software.

Google has simplified the process by imposing a graph of 21 points across the fingers, palm and back of the hand. This makes it easier for the software to understand the signal a hand is making.
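To illustrate why a fixed set of points helps, here is a minimal Python sketch of a 21-point hand graph. It is not Google's published code. The layout (one wrist point plus four points per finger), the function names and the "finger extended" rule are all assumptions made for illustration.

```python
from math import hypot

# Hypothetical layout, assumed for illustration only:
# point 0 is the wrist, followed by 4 points per finger, ordered base to tip.
FINGERS = ["thumb", "index", "middle", "ring", "pinky"]
WRIST = 0


def finger_points(finger_index):
    """Return the four point indices (base to tip) for one finger."""
    start = 1 + 4 * finger_index
    return [start, start + 1, start + 2, start + 3]


def build_edges():
    """Connect the wrist to each finger base, then chain each finger's points."""
    edges = []
    for f in range(len(FINGERS)):
        pts = finger_points(f)
        edges.append((WRIST, pts[0]))
        edges.extend(zip(pts, pts[1:]))
    return edges


def extended_fingers(points):
    """Name the fingers whose tip lies farther from the wrist than its second point.

    `points` is a list of 21 (x, y) tuples in the assumed layout above.
    This is a deliberately crude rule, not a sign-language recognizer.
    """
    wx, wy = points[WRIST]

    def dist(i):
        x, y = points[i]
        return hypot(x - wx, y - wy)

    result = []
    for f, name in enumerate(FINGERS):
        pts = finger_points(f)
        if dist(pts[3]) > dist(pts[1]):
            result.append(name)
    return result
```

Once a hand is reduced to 21 coordinates, a simple rule like `extended_fingers` can describe its shape without looking at raw pixels. A real app would feed these points into a far more capable classifier.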

Welcome Development

Campaigners for the hearing impaired welcome the development, although they caution that it has limits: the technology might not fully grasp some conversations because it will miss facial expressions and the speed of signing.

Google admits that its progress so far is just a first step, but publishing its algorithms could accelerate development in this field.
